This Time It’s Different

AI valuations are headed for a 50 to 70 percent correction. Scott Galloway called it. Here's why the bubble pops and the technology wins in the same crash.

Future-Proof

Trending

Ignorance Is Bliss

The state moved on AI this week: a toothless order on top, a populist revolt underneath. Everyone wants the steak. Nobody wants to watch the cow get butchered.

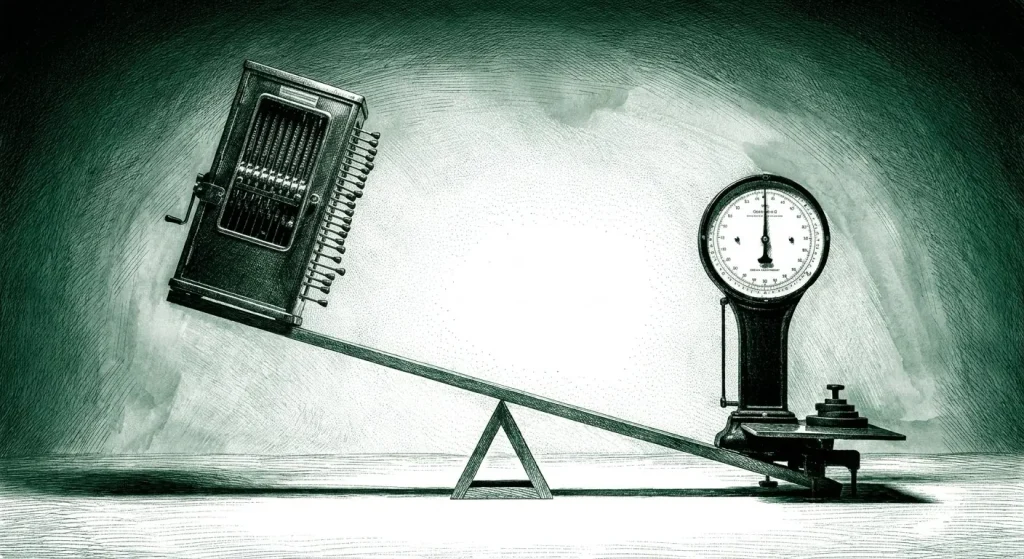

The Meter’s Running

Subsidized intelligence is over. The meter that finally priced the machine is turning toward the seat next to it.

Someone Made Fire

Anthropic filed the first big pure-play AI IPO this week. But the model is the lotion, not the cure. What they're really taking public is the operation.

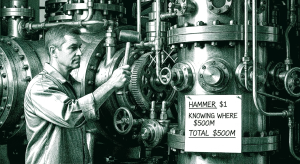

Knowing Where To Hit It

Two firms spent $500 million on AI last week. One did it by accident. The other did it to buy a moat no vendor could ever sell them.

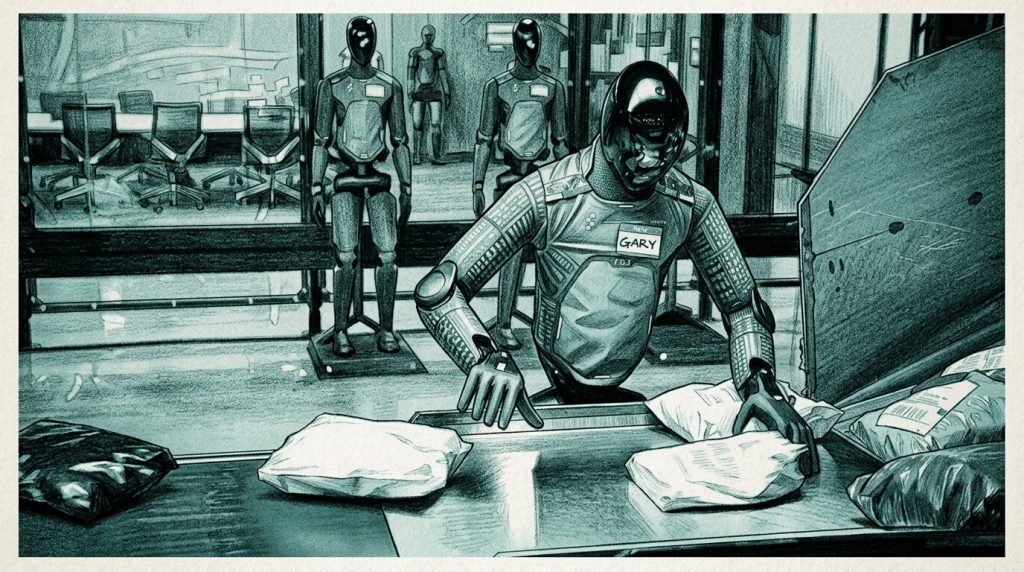

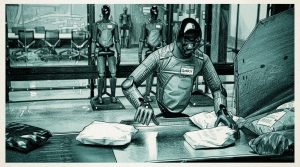

The Livestream That Made 543,000 People Realize We’re Cooked

I was one of the 543,000 people who watched robots work a warehouse shift on a livestream...

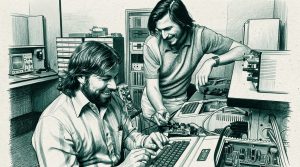

Apple’s Real Move and Why They Win The AI Race

I’ve been an Apple user since the Apple II. I remember the rainbow cable. I was in the...